Stream Processing for Everyone with SQL and Apache Flink

Where did we come from?

With the 0.9.0-milestone1 release, Apache Flink added the Table API, an API for processing relational data with SQL-like expressions. The central concept of this API is a Table, a structured data set or stream on which relational operations can be applied. The Table API is tightly integrated with the DataSet and DataStream APIs. A Table can easily be created from a DataSet or DataStream and can also be converted back into a DataSet or DataStream, as the following example shows.

In short, since version 0.9 Flink has offered the Table API to support relational operations.

    val execEnv = ExecutionEnvironment.getExecutionEnvironment
    val tableEnv = TableEnvironment.getTableEnvironment(execEnv)

    // obtain a DataSet from somewhere
    // (placeholder: the original post leaves the source unspecified)
    val tempData: DataSet[(String, Long, Double)] = ???

    // convert the DataSet to a Table
    val tempTable: Table = tempData.toTable(tableEnv, 'location, 'time, 'tempF)

    // compute your result
    val avgTempCTable: Table = tempTable
      .where('location.like("room%"))
      .select(
        ('time / (3600 * 24)) as 'day,
        'location as 'room,
        (('tempF - 32) * 0.556) as 'tempC
      )
      .groupBy('day, 'room)
      .select('day, 'room, 'tempC.avg as 'avgTempC)

    // convert result Table back into a DataSet and print it
    avgTempCTable.toDataSet[Row].print()

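As the example shows, converting a DataSet into a Table is simple: you only need to specify its metadata (the field names). You can then apply all kinds of relational operations to the Table, and finally convert the Table back into a DataSet.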

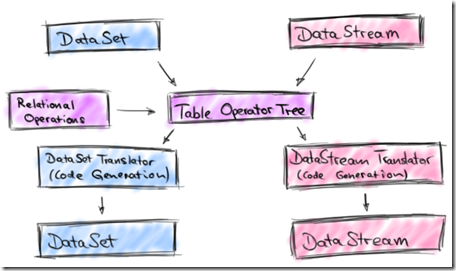

Although the example shows Scala code, there is also an equivalent Java version of the Table API. The following picture depicts the original architecture of the Table API.

对于table的关系型操作,最终通过code generation还是会转换为dataset的逻辑

Table API joining forces with SQL

The community was also well aware of the multitude of dedicated “SQL-on-Hadoop” solutions in the open source landscape (Apache Hive, Apache Drill, Apache Impala, and Apache Tajo, to name just a few).

Given these alternatives, we figured that time would be better spent improving Flink in other ways than implementing yet another SQL-on-Hadoop solution.

What we came up with was a revised architecture for a Table API that supports SQL (and Table API) queries on streaming and static data sources.

We did not want to reinvent the wheel and decided to build the new Table API on top of Apache Calcite, a popular SQL parser and optimizer framework. Apache Calcite is used by many projects, including Apache Hive, Apache Drill, and Cascading. Moreover, the Calcite community has put SQL on streams on its roadmap, which makes it a perfect fit for Flink’s SQL interface.

虽然社区已经有很多的Sql-on-Hadoop方案,flink希望把时间花在更有价值的地方,而不是再实现一套

但是当前这样的需要非常强烈,所以在revise Table API的基础上实现对SQL的支持

对于SQL的支持,借助于Calcite,并且Calcite已经把SQL on streams放在roadmap上,有希望成为streaming sql的标准

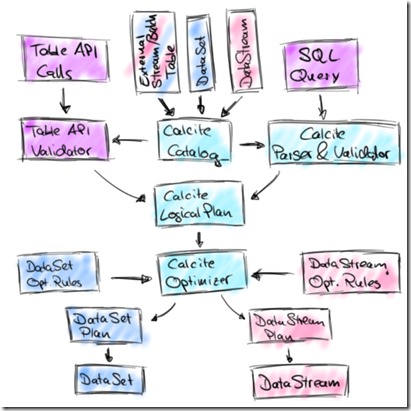

Calcite is central in the new design as the following architecture sketch shows:

The new architecture features two integrated APIs to specify relational queries, the Table API and SQL.

Queries of both APIs are validated against a catalog of registered tables and converted into Calcite’s representation for logical plans.

In this representation, stream and batch queries look exactly the same.

Next, Calcite’s cost-based optimizer applies transformation rules and optimizes the logical plans.

Depending on the nature of the sources (streaming or static) we use different rule sets.

Finally, the optimized plan is translated into a regular Flink DataStream or DataSet program. This step again involves code generation to compile relational expressions into Flink functions.

Here, Table API and SQL queries are both converted into Calcite’s logical plans in a uniform way, then optimized by the Calcite optimizer, and finally translated into Flink functions through code generation.

With this effort, we are adding SQL support for both streaming and static data to Flink.

However, we do not want to see this as a competing solution to dedicated, high-performance SQL-on-Hadoop solutions, such as Impala, Drill, and Hive.

Instead, we see the sweet spot of Flink’s SQL integration primarily in providing access to streaming analytics to a wider audience.

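In addition, it will facilitate integrated applications that use Flink’s APIs as well as SQL while being executed on a single runtime engine.

To reiterate, SQL support is not meant to create yet another dedicated SQL-on-Hadoop solution; the goal is to let more people use Flink. Put bluntly, this area is not a current strategic priority.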

What will Flink’s SQL on streams look like?

So far we have discussed the motivation for and architecture of Flink’s stream SQL interface, but what will it actually look like?

    // get environments
    val execEnv = StreamExecutionEnvironment.getExecutionEnvironment
    val tableEnv = TableEnvironment.getTableEnvironment(execEnv)

    // configure Kafka connection
    val kafkaProps = ...

    // define a JSON encoded Kafka topic as external table
    val sensorSource = new KafkaJsonSource[(String, Long, Double)](
      "sensorTopic",
      kafkaProps,
      ("location", "time", "tempF"))

    // register external table
    tableEnv.registerTableSource("sensorData", sensorSource)

    // define query on the external table
    val roomSensors: Table = tableEnv.sql(
      "SELECT STREAM time, location AS room, (tempF - 32) * 0.556 AS tempC " +
      "FROM sensorData " +
      "WHERE location LIKE 'room%'"
    )

    // define a JSON encoded Kafka topic as external sink
    val roomSensorSink = new KafkaJsonSink(...)

    // define sink for room sensor data and execute query
    roomSensors.toSink(roomSensorSink)
    execEnv.execute()

Compared with the Table API example, the same operations can be expressed in pure SQL.

Flink’s SQL does not currently support window aggregations, but Calcite’s streaming SQL does. For example:

    SELECT STREAM
      TUMBLE_END(time, INTERVAL '1' DAY) AS day,
      location AS room,
      AVG((tempF - 32) * 0.556) AS avgTempC
    FROM sensorData
    WHERE location LIKE 'room%'
    GROUP BY TUMBLE(time, INTERVAL '1' DAY), location

The same query can be expressed with the Table API:

    val avgRoomTemp: Table = tableEnv.ingest("sensorData")
      .where('location.like("room%"))
      .partitionBy('location)
      .window(Tumbling every Days(1) on 'time as 'w)
      .select('w.end, 'location, (('tempF - 32) * 0.556).avg as 'avgTempC)
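To make the semantics of the tumbling-window query more concrete, here is a minimal sketch in plain Scala collections, with no Flink dependency. It only illustrates what the query computes over a finite batch of readings; a streaming engine would emit each result as its window closes. The names `Reading`, `DailyAvg`, `windowEnd` and `dailyAverages` are hypothetical, and the sketch assumes that `time` is an epoch timestamp in milliseconds and that daily windows are aligned to the epoch.

```scala
object TumblingWindowSketch {
  // Hypothetical record types mirroring the (location, time, tempF) schema.
  final case class Reading(location: String, time: Long, tempF: Double)
  final case class DailyAvg(dayEnd: Long, room: String, avgTempC: Double)

  val MillisPerDay: Long = 24L * 60 * 60 * 1000

  // End (exclusive) of the one-day tumbling window that contains `time`.
  // Assumption: `time` is epoch milliseconds; windows are aligned to the epoch.
  def windowEnd(time: Long): Long =
    (Math.floorDiv(time, MillisPerDay) + 1) * MillisPerDay

  def dailyAverages(readings: Seq[Reading]): Seq[DailyAvg] =
    readings
      .filter(_.location.startsWith("room"))          // WHERE location LIKE 'room%'
      .groupBy(r => (windowEnd(r.time), r.location))  // GROUP BY TUMBLE(...), location
      .map { case ((end, room), rs) =>
        // AVG((tempF - 32) * 0.556), using the same approximation of 5/9 as the post
        val avgC = rs.map(r => (r.tempF - 32) * 0.556).sum / rs.size
        DailyAvg(end, room, avgC)
      }
      .toSeq
      .sortBy(a => (a.dayEnd, a.room))                // deterministic output order
}
```

Calling `TumblingWindowSketch.dailyAverages(readings)` returns one row per room and day, which corresponds to the `day`, `room` and `avgTempC` columns of the SQL query.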

What’s up next?

The Flink community is actively working on SQL support for the next minor version, Flink 1.1.0. In this first version, SQL (and Table API) queries on streams will be limited to selection, filter, and union operators. Compared to Flink 1.0.0, the revised Table API will support many more scalar functions and be able to read tables from external sources and write them back to external sinks. A lot of work went into reworking the architecture of the Table API and integrating Apache Calcite.

In Flink 1.2.0, the feature set of SQL on streams will be significantly extended. Among other things, we plan to support different types of window aggregates and maybe also streaming joins. For this effort, we want to collaborate closely with the Apache Calcite community and help extend Calcite’s support for relational operations on streaming data when necessary.
